Writer Maddie Franz talks about why you shouldn’t use generative AI for assignments.

Before Nov. 30, 2022, students who wanted to avoid doing their own work had to pay someone on a sketchy website to write their research papers or borrow a friend's essay from last semester. Now, it's as easy as typing a prompt into a website.

The release of ChatGPT, a text-generating artificial intelligence program, changed the landscape of cheating on assignments. Like plagiarism, using this technology to write for you cheapens college education and stifles learning.

To counteract this, Loyola held a professional development conference in January to help professors design class assignments around ChatGPT's limitations, The Phoenix previously reported. AI struggles with current events and with making strong arguments, often making up information and sticking to a rigidly centrist voice.

It's disappointing that such precautions have to be taken against students cheating with AI. In high school, it makes more sense to prevent the use of AI. Most students don't have a choice about whether they go to school, and some would rather do anything than complete an assignment themselves.

But college should be different. Students choose to go to college, and at Loyola, that is not a cheap endeavor.

Loyola’s unaided tuition for the 2023-24 school year is $50,270, a 4.5% increase from last year, The Phoenix previously reported. Room and board range from $9,000 to more than $12,000 a year, according to Residence Life’s website. With meal plans, books and additional fees, it can cost more than $60,000 a school year to attend.

So why would a student choose to cheapen their education by cheating with AI when they’re going into debt or dipping deep into their parents’ savings? There are a few possible reasons.

One defense may be that the class a student is cheating in isn't important for their major. Perhaps it's a core class or a prerequisite the student must complete before they get to learn more about their chosen field.

Unfortunately for those students, they made the decision to attend college. Core classes are part of graduation requirements at most universities. If a student only wants to learn things that are relevant to their future career, they could have pursued a certification and saved themselves tens of thousands of dollars.

While students may think their theology class is boring or their English literature class is pointless, these are parts of an education some people will never get in their lives.

Between 2011 and 2021, according to the U.S. Census Bureau, the share of adults who had completed a bachelor's degree or higher reached only 37.9%. It is a privilege to receive the breadth of education required to graduate from college.

Another excuse for using AI may be that the student isn't confident in their writing abilities. What do these students think school is for?

The only ways to get better at writing are to practice writing and to read more. Your writing won’t improve by letting ChatGPT generate your forum posts or by tweaking a few things in an AI-generated essay.

If students who want to improve their writing take their assignments seriously and take advantage of on-campus resources, they will get better.

For one, nearly every professor will work with students who ask for help with writing. Reviewing a previously submitted assignment allows the professor to give specific feedback on how the student can improve.

Professors aren't the only source of writing help. The second floor of the Information Commons is home to the Writing Center, where student tutors offer advice, assistance and ideas at every stage of the writing process. Simply schedule an appointment, and a tutor will help you in person or online. Sure, you can ask ChatGPT for writing advice, but a human tutor is much easier to communicate with.

Abby Palmisano, associate director of the Writing Center, said the Writing Center is useful for writers of all skill levels, including PhD students in English. There is no shame in asking for help.

Some students use ChatGPT as a search engine or research tool. But user beware — ChatGPT is known to return false facts and citations, according to the Associated Press.

Below the text prompt on the ChatGPT website, there is a disclaimer — “ChatGPT may produce inaccurate information about people, places, or facts.”

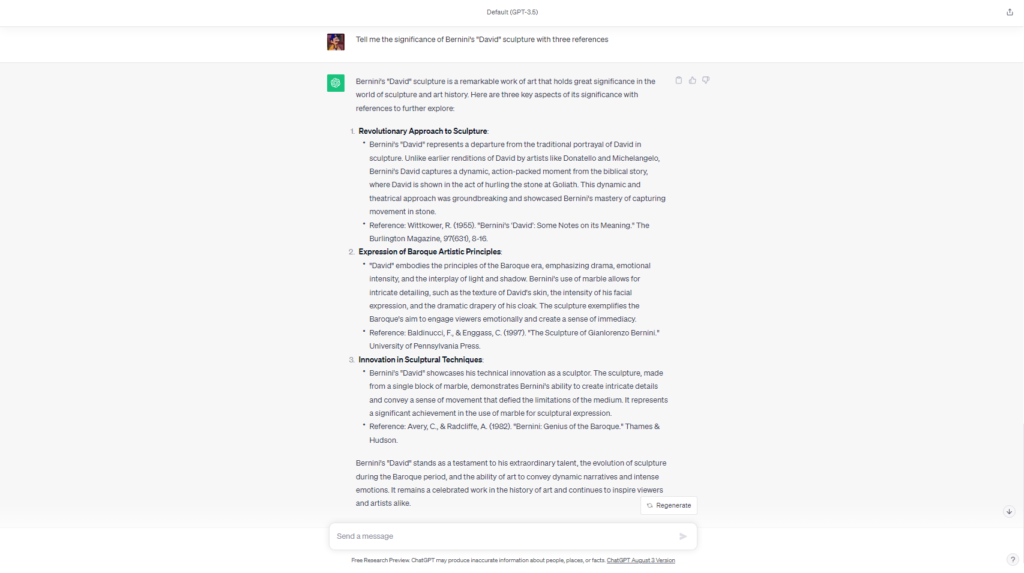

For example, I asked the AI to explain the significance of Gian Lorenzo Bernini's sculpture "David" and to provide three references. When I checked them against Loyola's library, none of the generated references pointed to real papers or books.

Rudolf Wittkower published multiple articles on Bernini in Burlington Magazine in the 1950s, but he didn't write "Bernini's 'David': Some Notes on its Meaning." ChatGPT credited Filippo Baldinucci with writing "The Sculpture of Gianlorenzo Bernini," but the book he actually wrote about the sculptor is called "The Life of Bernini."

I tried a scientific example next, asking ChatGPT to explain the significance of crayfish in the freshwater ecosystems of the Midwest, again with three references.

Two citations were completely made up. David M. Lodge and James W. Fetzner Jr. have both participated in multiple crayfish-related studies, but none by the titles ChatGPT generated.

“Crayfish occupancy and abundance in lakes of the Pacific Northwest, USA” is a real study. The reference had citation errors, though, and the information cited from it doesn’t appear in the study.

The purpose of writing assignments in college is to practice writing. The process is just as important as the result.

AI can be fun to play with. With text- and image-generating programs, the possibilities are truly endless, but that doesn't mean there aren't limitations. This technology won't help students develop their skills. If you truly think AI is an acceptable way to complete assignments, ask yourself: Why are you even here?

Featured image by Aidan Cahill / The Phoenix